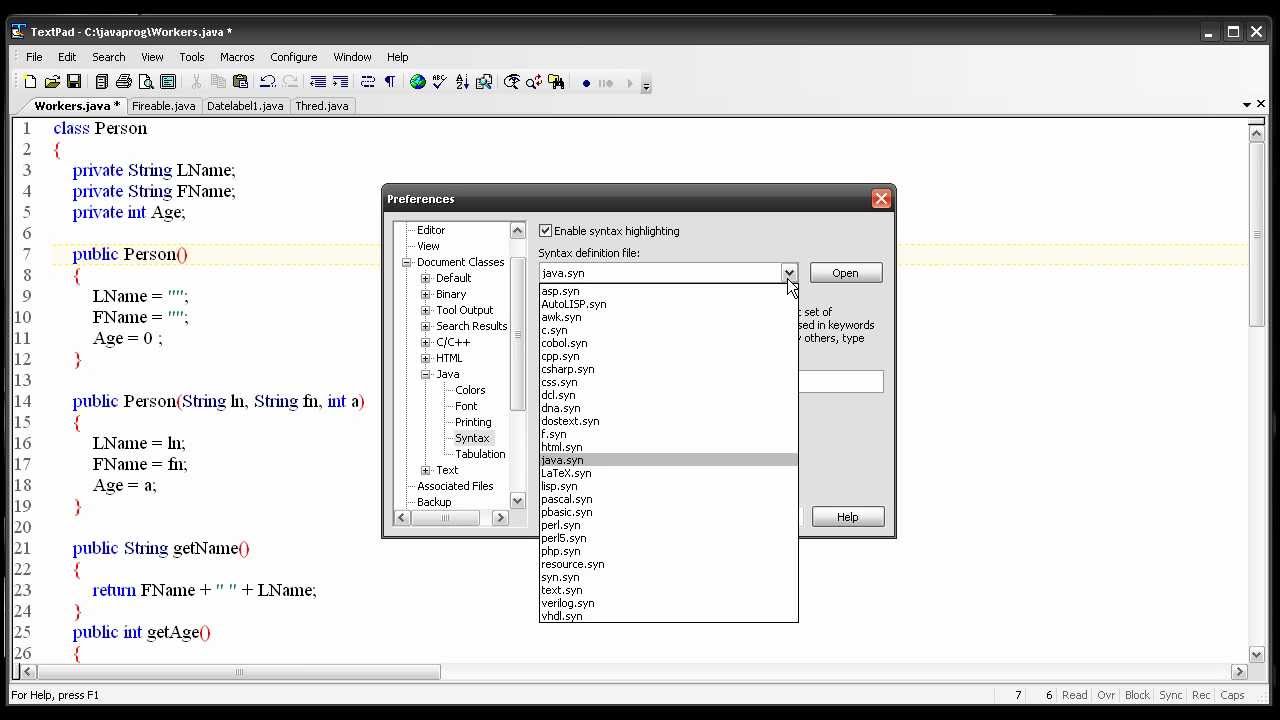

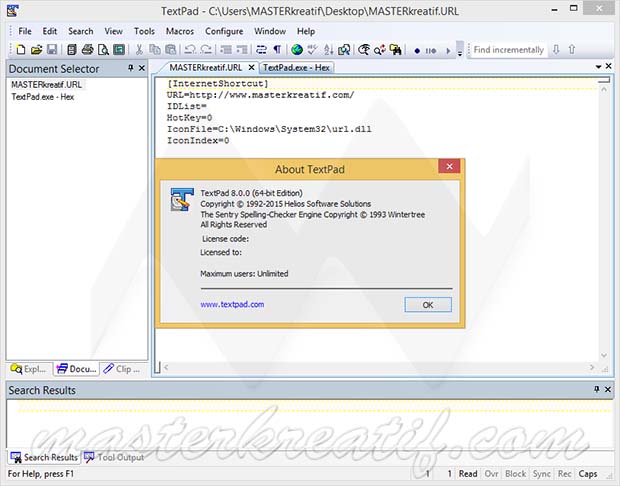

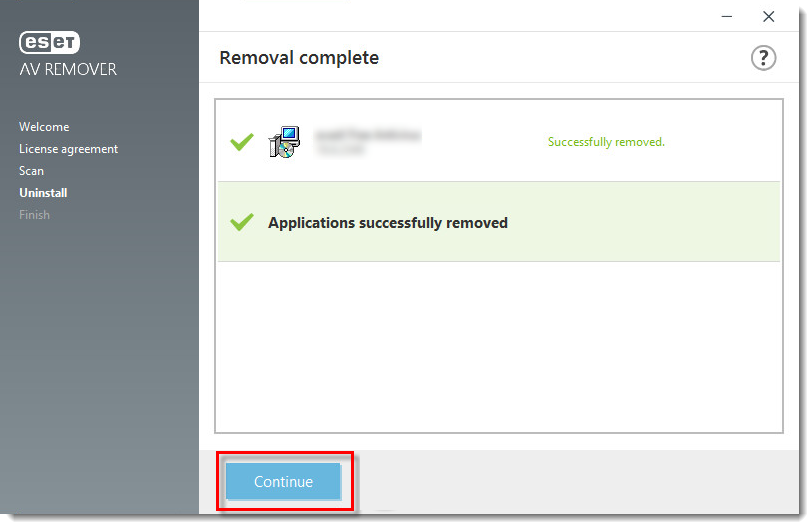

I’ve written in the past about how a robots.txt file could look fine, but actually not be fine. For example, maybe you add your directives, a sitemap file or sitemap index file, and then upload it to your site. You think all is good, but you find that directives are not being adhered to, maybe a boatload of URLs are being crawled that shouldn’t be, etc. When that happens, it can have a big impact on SEO (especially on large-scale sites with many URLs that should never be crawled).

So why does this sinister robots.txt problem happen? It often comes down to a single character. Sure, it’s an invisible character, but a character nonetheless. It’s called the UTF-8 BOM and I’m going to explain more about that in this post. Unfortunately, I’ve come across this issue many times during audits and while helping companies with technical SEO. But the results are extremely visible (and can be alarming).

Below, I’ll cover what UTF-8 BOM is, how it can impact your robots.txt file, how to check for it, and then how to fix the problem. So, if your robots.txt file is bombing, and you are still scratching your head wondering what’s going on, then this post is for you.

BOM stands for byte order mark and it’s used to indicate the byte order for a text stream. It’s an invisible character that’s located at the start of a file (and it’s essentially meaningless from an SEO perspective). Some programs will add the BOM to a text file, which again, can remain invisible to the person creating the text file. And the BOM can cause serious problems when Google tries to read the file. Actually, the UTF-8 BOM can make your robots.txt file, well, bomb… Sorry for the play on words here, but I couldn’t resist.

What can happen to a robots.txt file when UTF-8 BOM is present?

As mentioned above, when your robots.txt file contains the UTF-8 BOM, Google can choke on the file. And that means the first line (often user-agent) will be ignored. And when there’s no user-agent, all the other lines will return as errors (all of your directives). And when they are seen as errors, Google will ignore them. And if you’re trying to disallow key areas of your site, then that could end up as a huge SEO problem.

For example, here’s what a robots.txt file looks like in GSC when it contains UTF-8 BOM:

Needless to say, all of the directories that should be disallowed are not being disallowed. And that means many URLs that shouldn’t be crawled are being crawled (and many are being indexed). This can lead to all sorts of nasty SEO problems. And that could include quality problems, as well, depending on what is being crawled and indexed.

Right now, you might be sweating a little. Maybe you’ve seen problems with your robots.txt file and subsequent indexation, and you’re now wondering if UTF-8 BOM is the problem. Don’t worry, I’ll quickly walk you through how to check your robots.txt file now.

1. First, fire up GSC and use the robots.txt Tester. When you view the report, does it look like the screenshot above? Is the first line showing a red X next to it? If so, hover over the X and you might see a hint that says, “Syntax not understood”. If so, there’s a good chance you’ve got the UTF-8 BOM situation I’ve been explaining.
2. Next, visit the W3C Internationalization Checker. This tool will enable you to upload your robots.txt file and check for the presence of UTF-8 BOM.
3. Next, click the tab labeled “By File Upload”:
4. Next, click “Choose File” and select your robots.txt file. The tool will return the results, which will include a line about UTF-8 BOM. If you see that in the results, you know what the problem is (which is great).

How to fix UTF-8 BOM in your robots.txt file:

Fixing the issue is pretty easy. I recommend using a text editor like Textpad to create your new robots.txt file. When saving the file, ensure that BOM is not selected (some text editor programs have an option for adding the BOM).

Also, make sure you’re not using a word processing application like Microsoft Word for creating your robots.txt file. You should be using a pure text editor for creating your robots.txt file. I’m a big Textpad fan, but there are many others you can use as well. Once you make the changes, then use the W3C internationalization tool to check the revised file. If the BOM doesn’t show up, you’re good to go. If it does, you are doing something wrong while creating the robots.txt file.
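If you’d rather script the check and the fix described in this post, here’s a minimal Python sketch. It relies on the fact that the UTF-8 BOM is the three bytes `EF BB BF` at the very start of a file. The function names (`has_utf8_bom`, `strip_utf8_bom`, `fix_robots_file`) are my own placeholders for illustration, not part of any SEO tool, and the sketch assumes you have a local copy of your robots.txt file.

```python
import codecs

# The UTF-8 BOM is the byte sequence EF BB BF at the very start of a file.
UTF8_BOM = codecs.BOM_UTF8  # b"\xef\xbb\xbf"


def has_utf8_bom(data: bytes) -> bool:
    """Return True if the raw file bytes start with the UTF-8 BOM."""
    return data.startswith(UTF8_BOM)


def strip_utf8_bom(data: bytes) -> bytes:
    """Return the bytes without a leading UTF-8 BOM (unchanged if there is none)."""
    return data[len(UTF8_BOM):] if has_utf8_bom(data) else data


def fix_robots_file(path: str) -> bool:
    """Rewrite the file at `path` without a BOM.

    Hypothetical helper: returns True if a BOM was found and removed,
    False if the file was already clean (in which case it is not touched).
    """
    # Read in binary mode so the BOM bytes are not silently decoded away.
    with open(path, "rb") as f:
        data = f.read()
    if not has_utf8_bom(data):
        return False
    with open(path, "wb") as f:
        f.write(strip_utf8_bom(data))
    return True
```

For example, calling `fix_robots_file("robots.txt")` on a local copy would strip the BOM if one is present. After uploading the cleaned file, it’s still worth re-checking it with the W3C tool, since the editor or upload process that added the BOM in the first place may add it again.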
